How to create an effective Data Engineering Strategy for Enterprises

3AI November 14, 2019

“Enterprise Data Engineering” may sound like a dated buzzword that is no longer cutting-edge. But in this age of disruptive ideas, concepts and technologies, Enterprise Data Engineering has caught up with the necessary advancements and capabilities just as well as, if not better than, other disciplines. As organizations become increasingly keen to be data-driven and to embrace AI, the need for a robust Enterprise Data Engineering Strategy (EDE Strategy) has grown. Organizations without an efficient EDE Strategy in place risk ignoring or mismanaging their data assets. Inefficient use of data and insights weakens their competitive edge and lets competitors leap far ahead in no time.

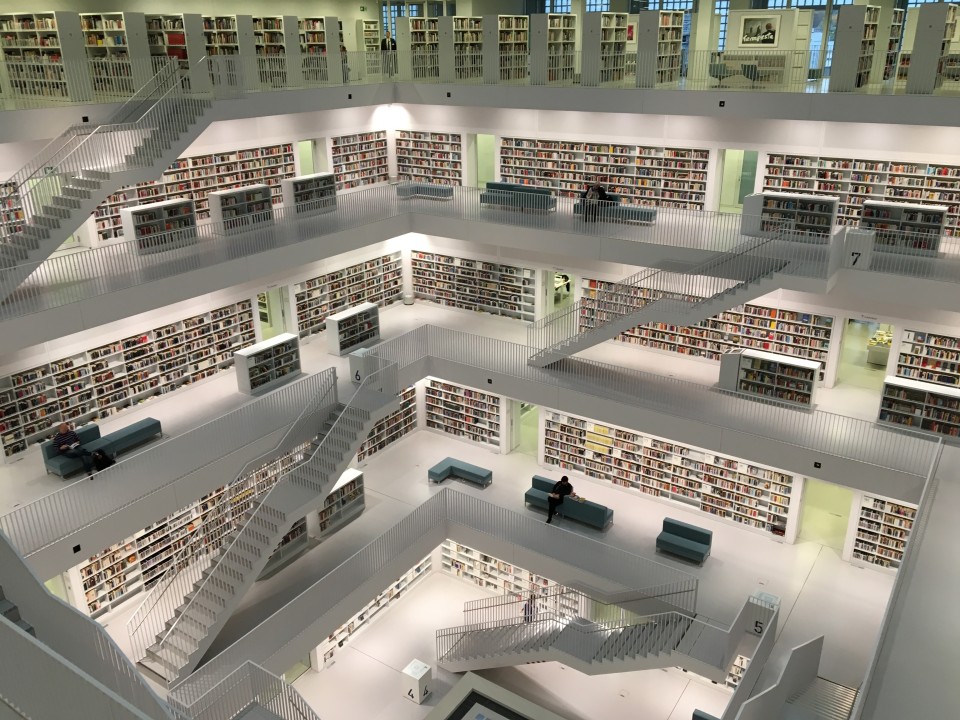

As the master plan for enterprise-wide data infrastructure, an EDE Strategy has become a foundational component of any meaningful corporate initiative in recent years. The “explosive growth of data” has been described as a challenge, an opportunity, a new trend, a significant asset, a source of immense insight, and more. Social media, connected devices and detailed transaction logging generate huge streams of data that simply can’t be ignored. In simple terms, a panoramic view and holistic control of the data landscape are essential to making data a powerhouse for the organization’s strategic growth.

Global IP traffic is projected to reach an annual run rate of 3.3 zettabytes by 2021, with TVs and smartphones together accounting for more than 60% of this traffic. (Source: Cisco)

As the data landscape changes, associated disciplines are aligning to the new demands. An EDE Strategy ensures that these alignments stay in line with the roadmap and cater to business users and their requirements.

An EDE Strategy encompasses multiple elements that are receiving increased attention nowadays because of advancements in and around them. Let me introduce some of those elements here:

Automated Data Ingestion & Enrichment

Data ingestion has become seriously challenging because of the variety and number of sources (social media, new devices, IoT, etc.), new types of data (text streams, video, images, voice, etc.), the volume of data, and the growing demand for immediately consumable data. In many scenarios, ingestion and enrichment are converging. As a result, data ingestion is moving away from traditional ETL tools and rapidly adopting Python and Spark. Streaming ingestion is not just about absorbing data as it is produced at the source. It is also about enabling AI within the ingestion pipeline to enrich data by bringing together the needed internal and external data sets, so that the journey from ingestion to insight happens automatically within seconds or minutes.
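To make the ingestion-plus-enrichment idea concrete, here is a minimal Python sketch built on plain generators, so each record is parsed and enriched while it is still in flight. In practice the same shape might run on Spark Structured Streaming. The record layout (`user_id,country,action`), the `REGION_BY_COUNTRY` reference table and the function names are hypothetical, and the enrichment is a simple lookup join rather than an AI model.

```python
from typing import Dict, Iterable, Iterator

# Hypothetical reference data (standing in for an internal or external data set)
# loaded once and kept in memory for fast lookups during enrichment.
REGION_BY_COUNTRY: Dict[str, str] = {"IN": "APAC", "US": "AMER", "DE": "EMEA"}


def ingest(raw_events: Iterable[str]) -> Iterator[Dict[str, str]]:
    """Parse raw 'user_id,country,action' lines as they arrive, skipping malformed ones."""
    for line in raw_events:
        parts = line.strip().split(",")
        if len(parts) != 3 or not all(parts):
            continue  # drop malformed records instead of failing the whole stream
        user_id, country, action = parts
        yield {"user_id": user_id, "country": country.upper(), "action": action}


def enrich(events: Iterable[Dict[str, str]]) -> Iterator[Dict[str, str]]:
    """Attach a derived 'region' field from the reference data while the record is in flight."""
    for event in events:
        yield {**event, "region": REGION_BY_COUNTRY.get(event["country"], "UNKNOWN")}


if __name__ == "__main__":
    stream = ["u1,in,click", "bad-line", "u2,US,purchase", "u3,br,view"]
    for record in enrich(ingest(stream)):
        print(record)
```

Because both stages are lazy generators, records flow through one at a time, which is the property that lets ingestion and enrichment merge into a single pipeline instead of separate batch steps.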

ML/AI Models and Algorithms

ML/AI brings intelligence to building data objects, tables, views and models, and to overseeing the data flow across the data infrastructure. It can identify data types, keys and join paths; find and fix data quality issues; identify relationships; determine which data sets need to be imported; derive insights; and more. The advent of ML/AI in data engineering therefore adds intelligence to learning, adjusting, alerting and recommending, while leaving complex tasks and administration to humans.
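As an illustration of identifying keys and join paths, the Python sketch below uses simple profiling heuristics (column uniqueness and value overlap) rather than a trained model; signals like these are the kind of features an ML-based tool might learn from. The table layout, the `threshold` default and all names are assumptions for illustration. Note that value overlap alone can give false positives on small integer columns, so a real tool would weigh further signals such as column names and data types.

```python
from typing import Dict, List, Tuple

Table = Dict[str, List[object]]  # column name -> column values


def candidate_keys(table: Table) -> List[str]:
    """Columns whose values are all non-null and unique are candidate primary keys."""
    keys = []
    for col, values in table.items():
        if None not in values and len(set(values)) == len(values):
            keys.append(col)
    return keys


def join_paths(left: Table, right: Table, threshold: float = 0.8) -> List[Tuple[str, str, float]]:
    """Suggest (left_col, right_col, overlap) pairs where most distinct right-side values
    also appear in the left-side column, a simple signal of a foreign-key relationship."""
    suggestions = []
    for lcol, lvals in left.items():
        lset = {v for v in lvals if v is not None}
        for rcol, rvals in right.items():
            rset = {v for v in rvals if v is not None}
            if not rset:
                continue
            overlap = len(rset & lset) / len(rset)
            if overlap >= threshold:
                suggestions.append((lcol, rcol, round(overlap, 2)))
    # Strongest suggestions first.
    return sorted(suggestions, key=lambda s: -s[2])


if __name__ == "__main__":
    customers = {"customer_id": [1, 2, 3], "name": ["Asha", "Ben", "Chen"]}
    orders = {"order_id": [10, 11, 12, 13], "customer_id": [1, 1, 3, 2], "amount": [5, 7, 5, 9]}
    print(candidate_keys(customers))      # ['customer_id', 'name']
    print(candidate_keys(orders))         # ['order_id']
    print(join_paths(customers, orders))  # [('customer_id', 'customer_id', 1.0)]
```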

Cloud Strategy

The most significant recent shift in the digital world is the amount of data being generated and transported. Studies suggest that 90% of the data existing today was generated in just the last two years, and this volume is expected to grow many times over in the coming years. On-premises infrastructure and provisioning processes aren’t agile enough to scale rapidly on demand. Even where they can be made to scale, the overhead of buying, managing and securing the infrastructure becomes highly expensive and error-prone. It is therefore essential for organizations to opt for efficient, intelligent data platforms in the cloud. The cloud offers advantages in cost, speed, scale, performance, reliability and security, and it has matured beyond its initial IaaS offerings into newer services and a wider range of providers. That doesn’t mean organizations can simply start a cloud migration and finish it at the press of a button. There should be a carefully drafted cloud strategy and execution roadmap for adopting the cloud in line with the organization’s requirements and constraints. Data and information in the cloud can give organizations flexibility, scalability and the ability to discover powerful insights. The cloud also makes it practical to apply ML/AI to discover dark data, monetization opportunities and disruptive business insights.

Data Lake

Among data-management technologies, the data lake occupies one of the most significant spaces. A data lake is not a specific technology but a concept: housing a “single source of truth” for an organization’s data. Once implemented, a data lake can hold and process both structured and unstructured data. Although the name suggests huge infrastructure, data lakes are relatively inexpensive to operate in the cloud. Data does not have to be indexed or prepared to fit specific storage requirements; instead, it is held in its native format and accessed, formatted or reconfigured when needed. While data lakes are easy to start thanks to accessible and affordable cloud offerings, large-scale implementations require careful planning and an incremental adoption model. In addition, ever-changing data regulations and compliance standards add to the challenges of implementing and managing data lakes.
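The “store in native format, shape it when needed” idea is usually called schema-on-read. The minimal Python sketch below assumes a local directory standing in for cloud object storage and a hypothetical `source/dt=YYYY-MM-DD/` partition layout: records are landed as raw JSON lines with no schema enforced, and a schema (field selection plus type casts) is applied only when the data is read.

```python
import json
import tempfile
from pathlib import Path
from typing import Any, Callable, Dict, Iterator, List


def land_raw(lake_root: Path, source: str, day: str, records: List[Dict[str, Any]]) -> Path:
    """Write records as-is (native JSON) under source/date partitions; no schema is enforced."""
    partition = lake_root / source / f"dt={day}"
    partition.mkdir(parents=True, exist_ok=True)
    path = partition / "part-0000.jsonl"
    with path.open("a", encoding="utf-8") as fh:
        for rec in records:
            fh.write(json.dumps(rec) + "\n")
    return path


def read_with_schema(
    lake_root: Path, source: str, schema: Dict[str, Callable[[Any], Any]]
) -> Iterator[Dict[str, Any]]:
    """Apply a schema only at read time: project the requested fields and cast their types.
    Fields missing from a raw record come back as None; extra raw fields are ignored."""
    for path in sorted((lake_root / source).glob("dt=*/*.jsonl")):
        with path.open(encoding="utf-8") as fh:
            for line in fh:
                raw = json.loads(line)
                yield {
                    field: (cast(raw[field]) if raw.get(field) is not None else None)
                    for field, cast in schema.items()
                }


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        land_raw(root, "sensors", "2019-11-14",
                 [{"id": "a", "temp": "21.5", "extra": [1, 2]}, {"id": "b"}])
        for row in read_with_schema(root, "sensors", {"id": str, "temp": float}):
            print(row)
```

The write path accepts records of any shape, so adding a new field at the source never breaks ingestion; the cost is that every reader must decide how to interpret the data, which is one reason governance matters more as a lake grows.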

Master Data Management (MDM)

There is ongoing debate about whether MDM is needed when a data lake is the central theme, with schema-on-write versus schema-on-read, unstructured data and the cloud as the main points of contention. Whoever wins these debates, it is important to understand MDM when discussing data engineering, because MDM is essential for organizations to serve their customers and clients in near real time and more efficiently. Because master data is the key reference for transactions, independent applications typically each maintain their own local copy. This leads to redundancy, inconsistency and inefficiency when the data is brought together, and integrating and processing it becomes a major challenge due to complexity, the potential for errors and increased cost. It is therefore important to address MDM carefully in the EDE Strategy, taking the organization’s objectives into account. Several models are practiced for implementing MDM, such as registry, hybrid, hub, repository, coexistence and consolidation, which I will discuss in upcoming articles.
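To show why locally maintained copies cause trouble and how a consolidated master record resolves them, here is a minimal Python sketch loosely in the spirit of a consolidation-style approach. The source systems, the email-based match key and the survivorship rule (“most recently updated non-empty value wins”) are all assumptions for illustration; real MDM tools use richer matching and configurable survivorship rules.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class SourceRecord:
    system: str            # originating application, e.g. "crm" or "billing" (hypothetical)
    email: str             # match key used to link records for the same customer
    name: Optional[str]
    phone: Optional[str]
    updated: date          # last modification date in the source system


def match_key(rec: SourceRecord) -> str:
    """Normalize the match key so trivially different spellings link together."""
    return rec.email.strip().lower()


def build_golden_records(records: List[SourceRecord]) -> Dict[str, Dict[str, Optional[str]]]:
    """Consolidate per-application copies into one master record per customer.
    Assumed survivorship rule: the most recently updated non-empty value wins."""
    groups: Dict[str, List[SourceRecord]] = {}
    for rec in records:
        groups.setdefault(match_key(rec), []).append(rec)

    golden: Dict[str, Dict[str, Optional[str]]] = {}
    for key, recs in groups.items():
        newest_first = sorted(recs, key=lambda r: r.updated, reverse=True)
        golden[key] = {
            "name": next((r.name for r in newest_first if r.name), None),
            "phone": next((r.phone for r in newest_first if r.phone), None),
            "sources": ",".join(sorted({r.system for r in recs})),  # lineage of the golden record
        }
    return golden


if __name__ == "__main__":
    records = [
        SourceRecord("crm", "Asha@Example.com ", "Asha R", None, date(2019, 5, 1)),
        SourceRecord("billing", "asha@example.com", "Asha Rao", "555-0101", date(2019, 9, 1)),
        SourceRecord("billing", "ben@example.com", "Ben", None, date(2019, 1, 1)),
    ]
    for key, rec in build_golden_records(records).items():
        print(key, rec)
```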

Visualization

Visualization helps us understand data in a visual context, revealing patterns, trends, relationships and so on. It may sound like an element external to data engineering, or merely a client of it. So why is Visualization an important element in an EDE Strategy?

The days when graphs and charts alone sufficed for human analysis are gone. With today’s data deluge, fitting so much information into a graph or chart is almost impossible for human beings, who need help building meaningful representations from huge amounts of data. ML/AI answers this need: its models and algorithms can reveal patterns and correlations by studying huge data sets in no time. To enable AI to process the data, it is important to arrange and label the data in the form that suits it best. Hence, Visualization is an important aspect to consider when constructing an enterprise-wide Data Engineering Strategy.
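As a small illustration of letting an algorithm surface correlations before anything is charted, the Python sketch below scans every pair of numeric columns, computes the Pearson correlation coefficient and returns the strong pairs labelled by column name. Plain Pearson correlation is a stand-in chosen for simplicity, not a specific ML model, and the column names and the `min_abs` cutoff are hypothetical.

```python
from itertools import combinations
from math import sqrt
from typing import Dict, List, Tuple


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length numeric columns."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        return 0.0  # a constant column carries no correlation signal
    return cov / (sx * sy)


def rank_correlations(
    columns: Dict[str, List[float]], min_abs: float = 0.5
) -> List[Tuple[str, str, float]]:
    """Return labelled column pairs whose |r| >= min_abs, strongest first."""
    pairs = []
    for a, b in combinations(columns, 2):
        r = pearson(columns[a], columns[b])
        if abs(r) >= min_abs:
            pairs.append((a, b, round(r, 3)))
    return sorted(pairs, key=lambda p: -abs(p[2]))


if __name__ == "__main__":
    cols = {
        "ad_spend": [1, 2, 3, 4, 5],
        "visits": [2, 4, 6, 8, 10],
        "returns": [5, 4, 3, 2, 1],
        "noise": [3, 1, 4, 1, 5],
    }
    for pair in rank_correlations(cols):
        print(pair)
```

The output is a short, named list of relationships rather than a raw table, which is the kind of pre-arranged, labelled summary that a dashboard or a downstream model can consume directly.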

Conclusion

In this era of data as the new oil, every effort is being made to improve the way data is received, cleansed, enriched, assembled and transformed. An EDE Strategy establishes effective deployment and management guidelines and continuously improves them, because data environments are living organisms. A solid EDE Strategy is essential to meet the demands of this new age, and every organization needs one to realize its digital vision. Failing to put a well-laid Enterprise Data Engineering Strategy in place is as good as regressing in this competitive world.