Genomics Reimagined by Big Data & Analytics

3AI October 22, 2020

Today we will explore how data science and big data analytics are being applied in the field of Genomics. Advances in sequencing technology have dramatically increased the volume of sequencing data, which in turn demands efficient management of computational resources such as computing time and memory, as well as careful prototyping of computational pipelines. I will explore these techniques and solution-oriented approaches in this blog entry.

Genomics is, broadly, the study of genetic material. It involves sequencing, mapping, and analyzing the DNA and RNA of a wide variety of living beings. Recent rapid advances in Life Science & Healthcare have put a lot of focus on determining the entire DNA sequence of humans in order to understand the genetic features of the human body. The primary objective of this research is to understand the relationship between genes and heredity, which should be instrumental in more effective and conclusive disease prevention and cure.

Just like any basic problem statement, let’s see how we can bring a definite structure to this problem using a solution-driven approach. The vast amount of data generated from genome sequencing, genome mapping, and analysis has made it critical for the scientific field to adopt Big Data. The data created by Genomics is huge: the human genome contains roughly 3 billion base pairs, encoding an estimated 20,000-25,000 genes. The sequencing data for a single human genome can therefore amount to around 100 Gigabytes. Genomics studies in general deal with petabytes of data, and the volume grows exponentially as we delve deeper into it to find answers about human health.
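To make the scale concrete, here is a rough back-of-the-envelope estimate in Python. The numbers are illustrative assumptions rather than measurements: a genome of about 3 billion base pairs, a sequencing depth of 30x, and 2 bytes stored per sequenced base (one for the base call, one for its quality score). Under these assumptions the uncompressed reads come to about 180 GB, the same order of magnitude as the figure quoted above once compression is taken into account.

```python
# Rough storage estimate for one sequenced human genome.
# All parameters below are illustrative assumptions, not measured values.

GENOME_BASES = 3_000_000_000   # ~3 billion base pairs in the human genome
COVERAGE = 30                  # assumed sequencing depth (each base read ~30 times)
BYTES_PER_BASE = 2             # assumed: 1 byte for the base call + 1 byte for its quality


def raw_read_size_gb(bases: int, coverage: int, bytes_per_base: int) -> float:
    """Return the approximate uncompressed size of the raw reads in gigabytes."""
    return bases * coverage * bytes_per_base / 1e9


def genomes_per_petabyte(size_gb: float) -> float:
    """Return how many genomes of the given size fit in one petabyte (1e6 GB)."""
    return 1e6 / size_gb


size_gb = raw_read_size_gb(GENOME_BASES, COVERAGE, BYTES_PER_BASE)
print(f"Raw reads per genome: ~{size_gb:.0f} GB")
print(f"Genomes per petabyte: ~{genomes_per_petabyte(size_gb):.0f}")
```

Changing the assumed coverage or bytes per base shifts the result proportionally, which is why a study covering a few thousand genomes quickly reaches the petabyte range.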

Big Data Analytics becomes critical in Genomics because of its ability to store, transform and analyze large amounts of genomic information which can unearth highly valuable medical insights for disease prevention and cure.

The Volume of Analytics

Genome-Wide Association Studies (GWAS), which are at the forefront of Genomics, use multiple Big Data & Analytics models to explore the connections between genes and diseases. More than 1600 genome studies have established distinct connections between 2000 genes and more than 400 common human disease symptoms. Some of the applications pursued through GWAS are:

- Predictive models to identify “high-risk” patients for certain diseases, such as Type 1 diabetes.

- Disease Subtypes Classification for guided clinical trials or highly targeted treatments of diseases like cancer.

- High-quality information processing used to screen drug candidates for toxicity and efficacy before they enter clinical trials.

Analytics Application Avenues in Genomics

Genomics covers a number of biomedical processes, each requiring vast amounts of data processing, analysis, storage and manipulation. These can be broadly categorized as follows, among others:

- DNA Sequencing Library: While the actual process of DNA Sequencing involves separating pieces of DNA according to their length through electrophoresis, the Sequencing System needs to maintain a vast universal library for sequencing any DNA sample, from a virus to a bacterium to a human. This library would contain every sequence applicable to any sample being tested. Keeping such a huge archive of DNA sequences calls for Big Data Analytics Systems.

- Annotation: In general, this simply means adding a note by way of explanation or commentary. In Genomics, the annotation process involves attaching a description to an individual gene and its protein (or RNA) product. The focus of each such record is the function assigned to the gene product. Complex automated scripts using Decision Analytics are used to determine how to assign gene functions. Though some aspects still need to be performed manually, future advancements in Decision Analytics may lead to fully automated Genomic Annotation.

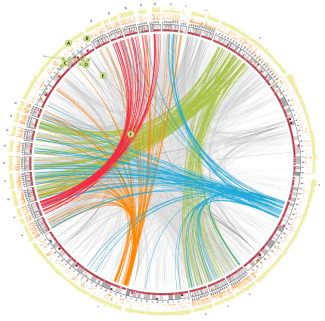

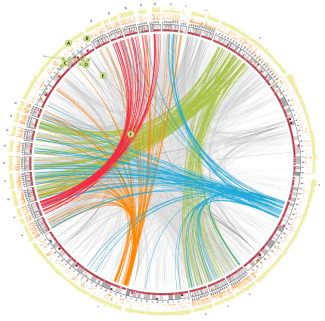

- Genomic Comparisons: Comparing genomes involves aligning billions of DNA reads to a genome and finding out the likelihood of similarities between random sequences. This requires systems that can handle Big Sequence Data, and complex correlation algorithms.

- Genomic Visualization: Genomic Browsing tools are needed to display complex correlations, and they must offer extensive options for customization.

- Synteny: A process that involves assessing two or more genomic regions to deduce whether they derive from a single ancestral genomic region. This has similar system requirements to Genomic Comparisons, being based on complex statistical correlation algorithms.
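The idea of scoring similarity between sequences, which underlies both Genomic Comparisons and Synteny above, can be illustrated with a toy example. The sketch below is not how production pipelines align reads (those rely on dedicated alignment tools); it uses a much simpler alignment-free measure, the Jaccard index over k-mers (all substrings of length k), purely to show the kind of computation involved. The function names and the default `k = 3` are choices made for this illustration.

```python
def kmers(sequence: str, k: int) -> set[str]:
    """Return the set of all length-k substrings (k-mers) of a DNA sequence."""
    sequence = sequence.upper()
    return {sequence[i:i + k] for i in range(len(sequence) - k + 1)}


def jaccard_similarity(seq_a: str, seq_b: str, k: int = 3) -> float:
    """Jaccard index of the two k-mer sets: shared k-mers / all distinct k-mers."""
    a, b = kmers(seq_a, k), kmers(seq_b, k)
    if not a and not b:
        return 0.0  # both sequences are shorter than k, nothing to compare
    return len(a & b) / len(a | b)


# Two short made-up sequences that differ by a single base.
print(jaccard_similarity("ACGTACGT", "ACGTTCGT"))
```

A score of 1.0 means the two sequences share exactly the same k-mers, and 0.0 means they share none. At genome scale the k-mer sets become enormous, which is where distributed storage and processing come in.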

The Benefits of Big Data Analytics in Genome Study

Genome Sequencing Cost reduction

In the early 2000s, sequencing a human genome cost around $100 million. The cost has since fallen by several orders of magnitude, and this downward trend is likely to continue in the coming years with stronger and wider adoption of Big Data. Both the research community and the Healthcare Industry have been working towards making genome sequencing affordable and accessible to the general public. Today, sequencing an individual human genome costs only around $5000 or less.

Time Saving

In a traditional setup, with vast amounts of data stored in databases, tests that rely on extract, transform, load (ETL) would take an incredibly long time. With a Big Data solution like Hadoop, raw data can be stored as-is and processed where it sits, so much of the upfront ETL work can be avoided. Analysis can therefore start sooner and run in parallel, which saves a lot of time. Newer and increasingly widely adopted tools like Spark and Python-based systems integrate well with this setup and shorten time to market for solutions built on this Big Data, with far greater results and possibilities.
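One way to picture analyzing data where it sits, rather than loading it into a database first, is a single streaming pass over a raw file. The sketch below is a plain Python illustration, not Hadoop or Spark code. It assumes the input is in FASTQ format with exactly four lines per record (identifier, bases, separator, quality scores), and the function names and the tiny in-memory sample are made up for this example.

```python
import io
from typing import Iterator, TextIO


def read_sequences(handle: TextIO) -> Iterator[str]:
    """Yield the sequence line of each 4-line FASTQ record, one at a time."""
    for line_number, line in enumerate(handle):
        if line_number % 4 == 1:  # the second line of every record holds the bases
            yield line.strip()


def gc_fraction(handle: TextIO) -> float:
    """Fraction of G/C bases across all reads, computed in one streaming pass."""
    gc = total = 0
    for seq in read_sequences(handle):
        gc += sum(base in "GCgc" for base in seq)
        total += len(seq)
    return gc / total if total else 0.0


# Hypothetical two-read sample standing in for a large raw file on disk.
sample = io.StringIO("@read1\nACGT\n+\nIIII\n@read2\nGGCC\n+\nIIII\n")
print(gc_fraction(sample))
```

Because the records are read lazily, memory use stays flat regardless of file size. Distributed frameworks apply the same pattern across many machines, with each one processing its own slice of the raw data.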

Better Analysis

Big Data Systems like Hadoop allow us to perform highly customized analysis that would not be possible in a traditional business intelligence tool or in a relational SQL setup.

Challenges in the Current Snapshot

Data Storage Costs

Generating and acquiring Big Data creates vast challenges for the storage, transfer and security of information. It may now be less costly to create data than to store it. As an example, the NCBI, which has been at the frontier of Big Data efforts in biomedical science since 1988, has not yet come up with a comprehensive, safe and inexpensive solution to the data storage problem.

Adoption of Big Data Techniques

There is always a gap between envisioning Big Data and implementing it when it comes to Data Sciences. The challenge is to break the complex Big Data problem down into smaller, more achievable data problems and solutions. The most critical adoption hurdle is the cost-benefit analysis, which works out in your favor as a researcher only when you get to the basics of the business problem and stitch the pieces together with the intent of delivering a quantifiable return on investment (RoI).

Large Initial Investment

Big Data management requires a large investment, which puts it beyond the reach of small laboratories and institutions. This poses a big challenge for conducting extensive and parallel biomedical research.

Big Data Transfer

Another challenge is transferring data from one place to another. At present, this is mainly done by sending external hard disks through the postal service. An alternative could be the use of BioTorrents, which allows scientific data to be shared using peer-to-peer file sharing technology. Torrents, which were primarily developed to facilitate the transfer of large amounts of data over the internet, could be applied to biomedical research.

Security & Privacy

Security and privacy of individuals’ data is also a concern. One possible solution is to implement advanced encryption algorithms, like those used in banking and the financial sector to secure client data.